Deep Learning summary for 2017: Reinforcement Learning and Miscellaneous apps

In the third part of our Deep Learning summary for 2017, we discuss new discoveries and breakthroughs in reinforcement learning and other fields.

Reinforcement learning (RL) is also on the rise. The term refers to training an agent in an interactive environment that rewards it for its actions, essentially giving it experience, much as humans learn in real life. The famous AlphaGo from Google DeepMind, a company we mentioned quite a lot in the previous article, is a bright example of such training: the bot analyzed a huge number of games and played against itself to improve. 2017 brought even more impressive results.

Reinforcement learning with unsupervised auxiliary tasks

Another example of RL in games is VizDoom, a research platform based on the game Doom. However, plain RL training takes quite a lot of time, so accelerating it with various auxiliary tasks is a promising and hot topic. Google DeepMind published a paper showing the effect of adding extra losses (auxiliary tasks such as pixel control) that give the agent additional training signal even when rewards from the environment are rare. This speeds up training significantly.
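To give a feel for how auxiliary tasks work, here is a minimal Python sketch (our own illustration, not DeepMind's code). It computes pixel-control pseudo-rewards, the average absolute pixel change in each cell of a grid laid over the screen, and adds a weighted auxiliary loss to the main RL loss. The grid size, the loss weights and the function names are assumptions chosen for the example.

```python
from typing import Dict, List

Frame = List[List[float]]  # grayscale frame: rows of pixel intensities


def pixel_change_rewards(prev: Frame, curr: Frame, cell: int) -> List[List[float]]:
    """Pseudo-reward per grid cell: average absolute pixel change between frames."""
    rows, cols = len(curr), len(curr[0])
    rewards = []
    for r0 in range(0, rows, cell):
        row_rewards = []
        for c0 in range(0, cols, cell):
            total, count = 0.0, 0
            for r in range(r0, min(r0 + cell, rows)):
                for c in range(c0, min(c0 + cell, cols)):
                    total += abs(curr[r][c] - prev[r][c])
                    count += 1
            row_rewards.append(total / count)
        rewards.append(row_rewards)
    return rewards


def total_loss(rl_loss: float, aux_losses: Dict[str, float],
               weights: Dict[str, float]) -> float:
    """Main RL loss plus a weighted sum of auxiliary losses (weights are hypothetical)."""
    return rl_loss + sum(weights[name] * loss for name, loss in aux_losses.items())


if __name__ == "__main__":
    prev = [[0.0] * 4 for _ in range(4)]
    curr = [[0.0] * 4 for _ in range(4)]
    curr[0][0] = 1.0  # a single pixel changes in the top-left 2x2 cell
    print(pixel_change_rewards(prev, curr, cell=2))  # [[0.25, 0.0], [0.0, 0.0]]
    print(total_loss(1.0, {"pixel_control": 0.5}, {"pixel_control": 0.2}))
```

The agent gets paid for learning to change parts of the screen, which keeps its visual features busy even in stretches where the game gives no reward at all.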

Robot training in virtual environments using Deep Learning

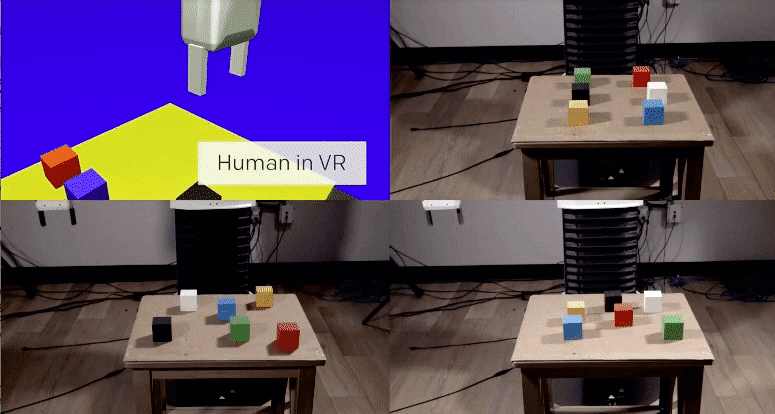

The OpenAI team published research on one-shot imitation learning, showing that a single demonstration in virtual reality can be enough to teach a robot to perform a task. Not every person learns that fast…

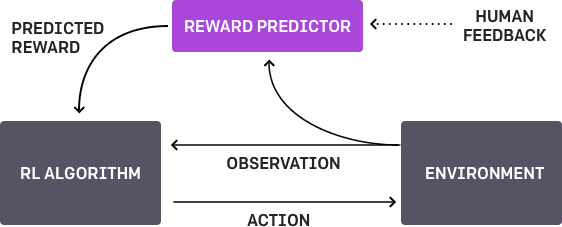

RL using human feedback

Another study, a joint effort by Google DeepMind and OpenAI, describes reinforcement learning guided by human feedback. The algorithm was given a task and showed a human two candidate solutions; the human picked the better one. Learning from these comparisons, the algorithm mastered the task quite rapidly.
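The core trick can be sketched in a few lines of Python. Assuming a linear reward model over hypothetical per-step features, the probability that the human prefers clip A over clip B is modelled from the difference of their predicted returns, and the reward weights are fitted to the human's choices by gradient descent on a cross-entropy loss. This is a simplified illustration under our own assumptions; the agent itself would then be trained with ordinary RL on the learned reward.

```python
import math
from typing import List, Sequence, Tuple

Segment = List[Sequence[float]]  # a trajectory clip: one feature vector per step


def segment_return(w: Sequence[float], seg: Segment) -> float:
    """Sum of predicted per-step rewards r(x) = w . x over a clip."""
    return sum(sum(wi * xi for wi, xi in zip(w, x)) for x in seg)


def prob_prefers_a(w: Sequence[float], seg_a: Segment, seg_b: Segment) -> float:
    """Probability that the human prefers clip A, from the return difference."""
    ra, rb = segment_return(w, seg_a), segment_return(w, seg_b)
    return 1.0 / (1.0 + math.exp(rb - ra))


def fit_reward(comparisons: List[Tuple[Segment, Segment, int]], dim: int,
               lr: float = 0.1, epochs: int = 200) -> List[float]:
    """Fit linear reward weights to human choices (label 1 means A was chosen)."""
    w = [0.0] * dim
    for _ in range(epochs):
        for seg_a, seg_b, label in comparisons:
            p = prob_prefers_a(w, seg_a, seg_b)
            for i in range(dim):
                fa = sum(x[i] for x in seg_a)
                fb = sum(x[i] for x in seg_b)
                # Gradient of the cross-entropy loss w.r.t. w[i]
                w[i] -= lr * (p - label) * (fa - fb)
    return w


if __name__ == "__main__":
    # Hypothetical data: the human always picks the clip with more of feature 0
    data = [([[1.0, 0.0]], [[0.0, 1.0]], 1), ([[0.0, 1.0]], [[1.0, 0.0]], 0)]
    weights = fit_reward(data, dim=2)
    print(weights[0] > weights[1])  # True
```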

Robot movement programming

We reckon you have all been following the progress of the adorable Atlas from Boston Dynamics? The robot is rapidly mastering even the most complicated forms of movement and is now even capable of doing backflips!

This kind of progress relies on software that teaches a robot to walk using a simple reward function that encourages correct movements. Google DeepMind researchers published a paper on producing flexible behaviors in simulated environments, describing how an agent (a humanoid figure) learned to choose the right route through an obstacle course. This is what the training process looks like from the algorithm's point of view:
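As an illustration, here is a hypothetical reward of the simple kind described above, written in Python: it pays the agent for forward speed, charges a small penalty for large motor commands and adds a bonus for every step it stays upright. The terms, weights and names are our assumptions, loosely modelled on common locomotion benchmarks, not the exact function from the DeepMind paper.

```python
from typing import Sequence


def locomotion_reward(x_before: float, x_after: float, dt: float,
                      actions: Sequence[float],
                      ctrl_cost_weight: float = 0.01,
                      alive_bonus: float = 1.0) -> float:
    """Hypothetical per-step reward: move forward, don't waste effort, stay alive."""
    forward_velocity = (x_after - x_before) / dt               # progress along the course
    control_cost = ctrl_cost_weight * sum(a * a for a in actions)  # penalise strong torques
    return forward_velocity - control_cost + alive_bonus


if __name__ == "__main__":
    walking = locomotion_reward(0.0, 0.1, 0.05, actions=[1.0, -1.0])
    standing = locomotion_reward(0.0, 0.0, 0.05, actions=[0.0, 0.0])
    print(walking > standing)  # True: moving forward pays more than standing still
```

Nothing in such a function says how to walk or jump; the point of the research is that rich behavior can emerge when an agent maximizes a reward this simple across varied terrain.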

OpenAI has kindly shared implementations of these algorithms in its GitHub repository, so you can train your own agent too!

Miscellaneous achievements

There are quite a few impressive practical applications of Deep Learning algorithms in real-world businesses. We will list some of them below.

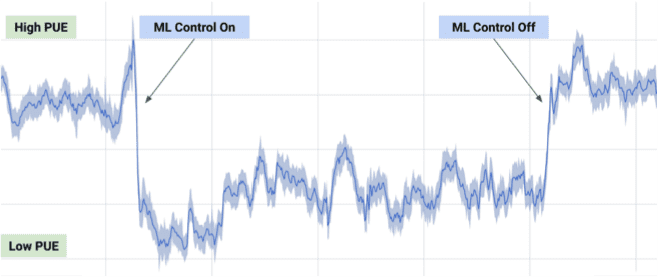

Datacenter cooling

Google also used some of DeepMind's algorithms to significantly lower its data center power usage, using data from thousands of sensors in its data centers to optimize the cooling process.

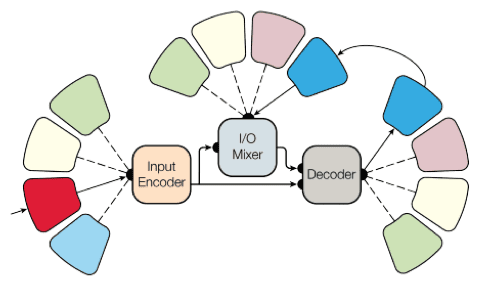

A universal DL model

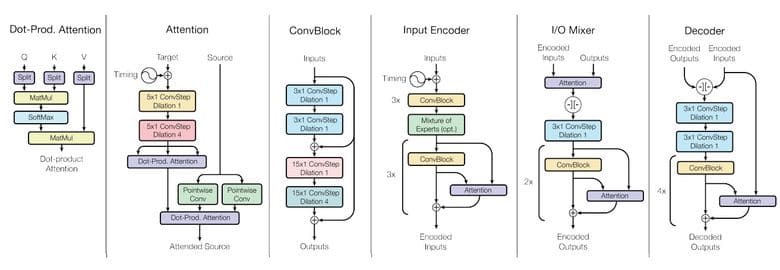

Until recently, every ML model had to be trained or retrained for one specific task. The Google Brain team made significant progress toward a universal model that can solve 8 tasks at once! The network combines shared blocks with several input and output subnetworks and switches between them depending on the task at hand.

Thanks to this approach, the model is well suited to solving multiple tasks: the blocks do not interfere with each other and sometimes even help each other, and performance is strong on both small and large datasets.
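The routing idea can be shown with a toy Python class. All names here are hypothetical and this is not the tensor2tensor API: each task gets its own small input and output adapters, while every task passes through the same shared body. In the real model the shared part is a large neural network; here it is a plain function so the example stays runnable.

```python
from typing import Any, Callable, Dict, List

Vector = List[float]


class ToyMultiModel:
    """Task-specific adapters wrapped around one shared body (toy sketch)."""

    def __init__(self, shared_body: Callable[[Vector], Vector]):
        self.shared_body = shared_body
        self.encoders: Dict[str, Callable[[Any], Vector]] = {}
        self.decoders: Dict[str, Callable[[Vector], Any]] = {}

    def add_task(self, task: str, encoder: Callable[[Any], Vector],
                 decoder: Callable[[Vector], Any]) -> None:
        self.encoders[task] = encoder
        self.decoders[task] = decoder

    def __call__(self, task: str, raw_input: Any) -> Any:
        hidden = self.encoders[task](raw_input)   # task-specific input block
        hidden = self.shared_body(hidden)         # shared by every task
        return self.decoders[task](hidden)        # task-specific output block


if __name__ == "__main__":
    model = ToyMultiModel(shared_body=lambda v: [2.0 * x for x in v])
    model.add_task("text_length", lambda s: [float(len(s))], lambda h: int(h[0]))
    model.add_task("sum_numbers", lambda xs: [float(sum(xs))], lambda h: h[0])
    print(model("text_length", "hello"))    # 10
    print(model("sum_numbers", [1, 2, 3]))  # 12.0
```

Because the shared body sees data from every task, whatever it learns from one task is available to the others, which is where the occasional mutual help comes from.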

Get the code from tensor2tensor and play with it yourself!

ImageNet training in 1 hour

The Facebook AI Research group published the results of an experiment in which a ResNet-50 model was trained on ImageNet in one hour, with about 90% scaling efficiency. They did have to use a HUGE cluster of 256 GPUs for the task, yet this approach now lets Facebook run its experiments much faster!
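Two ingredients of the Facebook recipe are easy to show in code: the linear scaling rule (multiply the learning rate by k when the minibatch grows k times) and a gradual warmup that ramps the rate up over the first epochs. The sketch below assumes the reference setting as we understand it from the paper, a rate of 0.1 for a batch of 256 images and 5 warmup epochs; for the 8,192-image minibatches used there, the peak rate comes out at 3.2. The function names are our own, and the later learning-rate decay steps are omitted.

```python
def scaled_lr(batch_size: int, base_lr: float = 0.1, base_batch: int = 256) -> float:
    """Linear scaling rule: learning rate grows in proportion to the batch size."""
    return base_lr * batch_size / base_batch


def warmup_lr(iteration: int, iters_per_epoch: int, batch_size: int,
              warmup_epochs: int = 5, base_lr: float = 0.1,
              base_batch: int = 256) -> float:
    """Ramp linearly from base_lr to the scaled rate over the warmup epochs."""
    target = scaled_lr(batch_size, base_lr, base_batch)
    warmup_iters = warmup_epochs * iters_per_epoch
    if iteration >= warmup_iters:
        return target  # decay steps after warmup are not modelled here
    return base_lr + (target - base_lr) * iteration / warmup_iters


if __name__ == "__main__":
    for it in (0, 250, 500):
        print(it, round(warmup_lr(it, iters_per_epoch=100, batch_size=8192), 4))
```

Starting with a small rate avoids the unstable early updates that a rate of 3.2 would otherwise cause while the network weights are still far from sensible values.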

Self-driving cars are ever closer

Google is launching a pilot project in Phoenix, and its self-driving cars have already covered more than 3,000,000 kilometers across the US. As more and more US states allow self-driving cars on their roads, the future is ever closer.

Healthcare

As we mentioned in the first article of our Deep Learning summary for 2017, Google DeepMind is actively working to bring its DL algorithms into the healthcare industry, in particular to fight cancer. It has already created a separate department, DeepMind Health, for this purpose.

Conclusions

Remember: in the near future, knowledge of and experience with Machine Learning systems will be expected of an IT specialist just as the ability to work with databases is today. Be prepared: this brave new world of self-driving cars, image-recognizing robots, fake TV interviews with the President and computer-synthesized music is near!

2017 was a very fruitful year for Deep Learning. Text and speech recognition, audio decoding and generation, machine perception, improved reinforcement learning mechanisms: progress in all of these fields happened within a single year, and much more is sure to come!

We are thrilled to witness this rapid progress and have taken part in quite a few Deep Learning projects ourselves. Stay tuned, more great news is surely on the way, and should you have any inquiries, feel free to contact us at once! If you found this summary useful, share it with your friends!

Source: Habrahabr.ru