IT Svit deployment evolution — from 3 hours to 2 minutes


One of IT Svit's products is Hurma, an integrated HR and recruiting system we developed from scratch. This is the story of how we reduced its deployment time from 3 hours to 2 minutes.

Hurma is built with the Laravel framework and runs in a Kubernetes cluster. The system is under active development and new users are joining rapidly, so it is crucial to release new versions, bug fixes and features quickly.

Early stage: 3 hours of manual deployment

During initial development, the system was mostly deployed manually, and a deployment took around 3 hours. That time went to the following actions:

- Building a Docker image with the complete runtime environment

- Adjusting Kubernetes manifests for infrastructure provisioning

- Uploading assets to the cluster

- Updating dependencies

- Testing & debugging the application

While this pace was sufficient for development purposes, it was clearly inadequate for commercial use.

Trial environment: 30 minutes by a pipeline

When the system entered beta testing, we needed to launch around 10 instances at once. We therefore automated the process and created a CI/CD pipeline:

- Prepare a unified Docker image

- Prepare the Kubernetes manifest templates

- Create the Jenkins Pipeline

Thanks to unification, templating and automation, the deployment time dropped from 3 hours to 30-45 minutes. We could easily set up around 10 instances per day to provide access to our trial environments.
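
To illustrate the templating idea, here is a minimal Python sketch that renders one Kubernetes manifest per trial instance from a single shared template. It is not our actual pipeline code: the template fields, the instance names and the `registry.example.com/hurma:0.1` image tag are assumptions made up for the example.

```python
from string import Template

# Hypothetical manifest template; field names and values are illustrative only.
MANIFEST_TEMPLATE = Template("""\
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hurma-$instance
  namespace: $instance
spec:
  replicas: $replicas
  selector:
    matchLabels:
      app: hurma-$instance
  template:
    metadata:
      labels:
        app: hurma-$instance
    spec:
      containers:
        - name: app
          image: $image
""")


def render_manifest(instance: str, image: str, replicas: int = 1) -> str:
    """Fill the shared template with the values of one trial instance."""
    return MANIFEST_TEMPLATE.substitute(
        instance=instance, image=image, replicas=replicas
    )


def render_all(instances: list[str], image: str) -> dict[str, str]:
    """Render one manifest per instance, all based on the same unified image."""
    return {name: render_manifest(name, image) for name in instances}


if __name__ == "__main__":
    # Assumed instance names and image tag, for demonstration only.
    manifests = render_all(
        ["trial-01", "trial-02"], image="registry.example.com/hurma:0.1"
    )
    print(manifests["trial-01"])
```

Because every instance differs only in a few substituted values, adding another trial environment means adding one more name to the list rather than editing a manifest by hand.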

Release stage: new environment launch in 10 minutes

After the release of Hurma in September 2018, the number of active instances grew rapidly, as it is a capable all-around HR and recruiting tool for any business. We started looking for further ways to shorten the product configuration time and decided to pre-download the “vendors” (the external libraries and dependencies used in the application). Instead of being downloaded for each environment separately, they are now stored in advance as a backup file and unpacked during environment setup. As a result, we shortened the deployment time to 10 minutes at most.
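
The pre-stored vendors approach can be sketched roughly as follows. This is a simplified Python illustration, not our production tooling: keying the archive by a hash of `composer.lock`, the `cache_dir` layout and the caller-supplied `install` function are all assumptions for the example.

```python
import hashlib
import tarfile
from pathlib import Path


def lockfile_key(lockfile: Path) -> str:
    """Identify a dependency set by the hash of its lock file (an assumption)."""
    return hashlib.sha256(lockfile.read_bytes()).hexdigest()[:16]


def restore_or_build_vendor(project: Path, cache_dir: Path, install) -> bool:
    """Unpack a cached vendor archive if present; otherwise install and cache it.

    `install` is a hypothetical callable standing in for the slow download step.
    Returns True when the cached archive was used.
    """
    key = lockfile_key(project / "composer.lock")
    archive = cache_dir / f"vendor-{key}.tar.gz"
    if archive.exists():
        # Fast path: unpack the pre-stored dependencies into the project.
        with tarfile.open(archive, "r:gz") as tar:
            tar.extractall(project)
        return True
    # Slow path: download once, then store the result for later environments.
    install(project)
    cache_dir.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive, "w:gz") as tar:
        tar.add(str(project / "vendor"), arcname="vendor")
    return False
```

With this shape, only the first environment built for a given dependency set pays the download cost; each later one only unpacks an archive.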

Production stage: updates take 2 minutes only

As the system is under active development, many changes are added and smaller releases become more frequent. These changes often alter the database too, and applying them in the proper order became the next bottleneck.

For example, if we have environments running v0.3, v0.4 and v0.5, we can’t use the same procedure to update each of them to v0.6. Updating from v0.3 requires updating to v0.4 and then to v0.5 before v0.6, while updating from v0.5 to v0.6 can happen directly.

The solution we developed was to insert the seed operations into the migrations. This gave us a unified approach to database upgrades: the Laravel framework now automatically handles the sequential upgrade from any previous version to the desired one. It shortened the deployment time even further, so a single environment is now updated within 2 minutes.
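
The principle of a sequential upgrade can be shown with a small Python sketch. It is a simplified model and not Laravel code: Laravel records applied migrations in its own `migrations` table, while this example compares version numbers, and the `MIGRATIONS` list and `make_step` helper are hypothetical.

```python
def parse(version: str) -> tuple[int, ...]:
    """Turn 'v0.4' into (0, 4) so versions compare numerically."""
    return tuple(int(part) for part in version.lstrip("v").split("."))


def make_step(version: str):
    """Return a stand-in migration that applies schema changes and seed data."""
    def migrate(db: list[str]) -> None:
        db.append(version)  # the real step would alter tables and insert seeds
    return migrate


# Ordered list of (target version, migration); the versions mirror the example above.
MIGRATIONS = [(v, make_step(v)) for v in ("v0.4", "v0.5", "v0.6")]


def upgrade(db: list[str], current: str, target: str) -> str:
    """Apply, in order, every migration newer than `current` up to `target`."""
    for version, migrate in MIGRATIONS:
        if parse(current) < parse(version) <= parse(target):
            migrate(db)
            current = version
    return current


if __name__ == "__main__":
    old_env: list[str] = []
    upgrade(old_env, "v0.3", "v0.6")   # runs the v0.4, v0.5 and v0.6 steps
    recent_env: list[str] = []
    upgrade(recent_env, "v0.5", "v0.6")  # runs only the v0.6 step
    print(old_env, recent_env)
```

The same `upgrade` call works for every environment regardless of its starting version, which is what removes the need for per-version update procedures.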

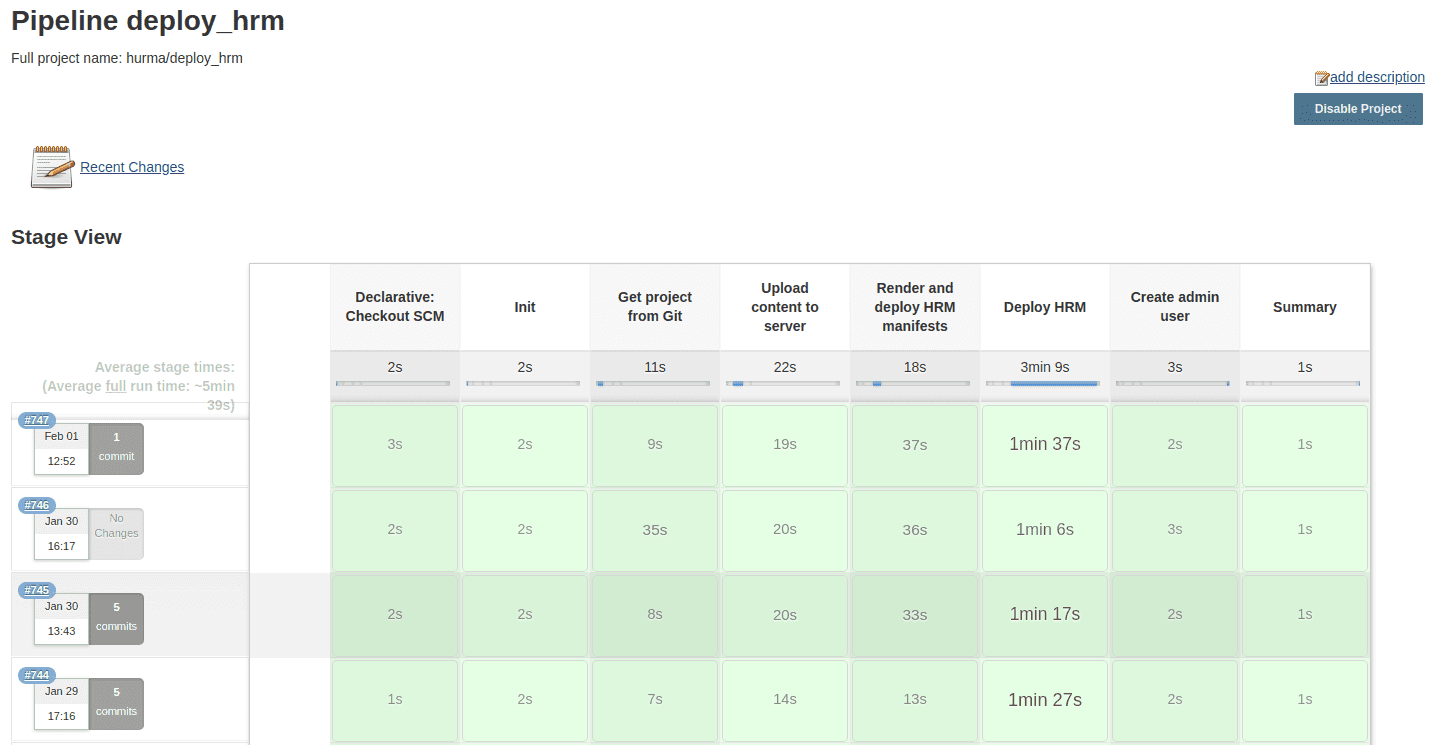

No-hassle updates: no human intervention

Updating many similar environments is usually tedious. You have to concentrate on entering the proper values for each environment and repeat the process many times. That is acceptable with 1-10 environments, but with 50-100 it becomes torture.

We reduced the manual work by creating a parameterized Jenkins Pipeline that updates environments in parallel. As the update parameters are similar across all environments, we put them into a “settings” file that represents the “release” state and is read by Jenkins.

Now all that is necessary is to commit the new “release” state and select the required environments in the Jenkins Pipeline parameters; the rest happens automatically.
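
The idea of one shared release state applied to many selected environments in parallel can be sketched in Python. This is an illustration only, not our Jenkins Pipeline: the settings keys, the environment names and the `update_environment` placeholder are assumptions.

```python
import json
from concurrent.futures import ThreadPoolExecutor

# Hypothetical "release" state, as it might be stored in a committed settings file.
RELEASE_SETTINGS = json.loads(
    '{"version": "v0.6", "image": "registry.example.com/hurma:0.6"}'
)


def update_environment(name: str, release: dict) -> str:
    """Placeholder for the real update steps of a single environment."""
    return f"{name} -> {release['version']}"


def update_selected(
    environments: list[str], release: dict, workers: int = 4
) -> list[str]:
    """Update the selected environments in parallel with the same release state."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the order of the input list.
        return list(
            pool.map(lambda env: update_environment(env, release), environments)
        )


if __name__ == "__main__":
    for line in update_selected(["client-a", "client-b", "client-c"], RELEASE_SETTINGS):
        print(line)
```

The operator chooses only which environments to update; everything else comes from the single release state, so no values are typed per environment.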

To-do: log processing

We still need to implement centralized log processing to enable usage statistics and better prioritization of further product development. However, extracting value from a stream of logs is quite complicated, so we decided to use Sentry, an error-tracking tool, for simple exception analysis. Once integrated into our project's exception handling through a Laravel-compatible API, it provides a convenient dashboard for analyzing logs and discovering errors. As a result, monitoring becomes much simpler.

Future plans: immutable infrastructure and Helm charts

We are now working on implementing immutable infrastructure for Hurma. We will build all core functionality into a single Docker image. The only thing left outside will be dynamic content such as avatars, media files, documents, etc. This will greatly simplify and speed up the deployment of new environments and their updates.

We are also working on introducing Helm charts. This will enable the next step in templating and make configuring and managing the Kubernetes cluster much easier.

How many environments can 1 server host?

It depends on server capacity. Currently, a single production server of ours hosts around 5 environments. We are going to change this in the future by adding a robust Kubernetes storage engine. With it, we won’t have to manually track how many environments run on a single server. Instead, we’ll let the Kubernetes controller distribute the workload evenly.

How to ensure optimal system performance?

- Correct resource planning and allocation. When you know the system requirements of a component, e.g. that it requires 2 vCPU and 8 GB of RAM, it makes no sense to allow it only 0.5 vCPU and 2 GB of RAM. It won’t work optimally, if it works at all.

- Process isolation. Docker adds this to the system: we can make sure that a process failure within one container won’t affect another container.

- Redundancy and load balancing. It always makes sense to distribute load across several instances of a component. This not only improves performance but also results in a better user experience due to higher uptime.
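
The resource planning point can be made concrete with a little arithmetic. The Python sketch below reuses the 2 vCPU / 8 GB requirement and the 0.5 vCPU / 2 GB allocation from the example above; the 16 vCPU / 64 GB server is a hypothetical figure, not a description of our hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    vcpu: float
    ram_gb: float


def shortfall(required: Resources, allocated: Resources) -> Resources:
    """How much CPU and RAM a component is missing compared to its requirement."""
    return Resources(
        vcpu=max(0.0, required.vcpu - allocated.vcpu),
        ram_gb=max(0.0, required.ram_gb - allocated.ram_gb),
    )


def components_per_server(server: Resources, per_component: Resources) -> int:
    """How many fully provisioned components fit; the scarcer resource decides."""
    return int(
        min(server.vcpu // per_component.vcpu, server.ram_gb // per_component.ram_gb)
    )


if __name__ == "__main__":
    required = Resources(vcpu=2, ram_gb=8)
    allocated = Resources(vcpu=0.5, ram_gb=2)
    print(shortfall(required, allocated))  # missing 1.5 vCPU and 6 GB of RAM

    server = Resources(vcpu=16, ram_gb=64)  # hypothetical server size
    print(components_per_server(server, required))  # 8
```

Checking the shortfall before deployment shows under-provisioning early, and the per-server count gives a rough upper bound for capacity planning.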

Final thoughts on IT Svit Hurma deployment evolution

We have come a long way from a manual configuration that took 3 hours to a fully automated one that takes 5 minutes for a completely fresh environment setup (2 minutes for an update). We can now apply this experience to other projects, further reducing the time-to-market for our customers' products.

We also understand how to improve our deployment pipeline even further, and we will keep working on it to deliver ever-improving results. This culture of constant self-development and of finding new ways to improve and innovate is one of the things that help us remain among the top 10 Managed Services Providers worldwide, according to Clutch.

If you would like to benefit from IT Svit DevOps expertise for your products and services, let us know. We are always ready to help!